Chapter Eleven: A Love Letter to Gamers — Sega's Sacrifice and Nintendo's Walled Garden

January 31, 2001. Sega held a press conference in Tokyo.

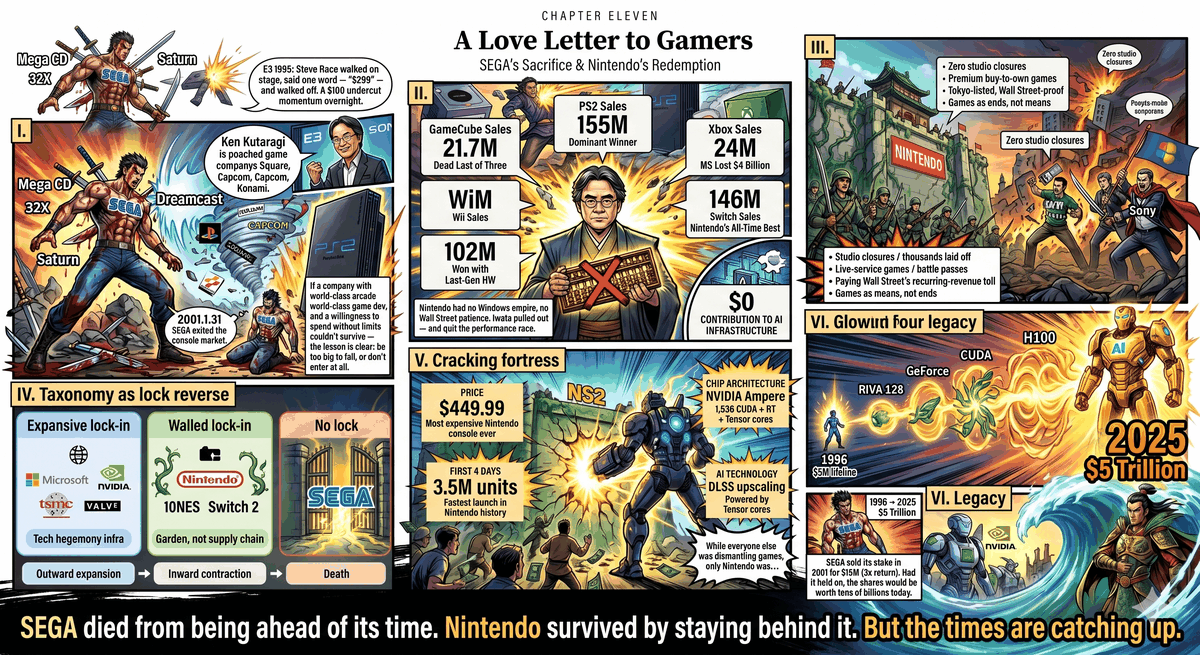

Sega announced the discontinuation of the Dreamcast. The company was exiting the console hardware market. A company that had been building game consoles since the SG-1000 in 1983, through the glory days of the Mega Drive, the bitter struggle of the Saturn, and the final stand of the Dreamcast, was leaving the hardware battlefield for good.

The press conference was short. The statement was restrained. But that evening, in the game shops of Akihabara, clerks moved the Dreamcast display cases to the corners. On 2ch forums, die-hard fans wrote eulogies. Someone quoted a line from Shenmue: "いつか必ず、この道を。" (Someday, I will walk this road to its end.)

Sega the console maker was dead. But what it had done before dying carries staggering weight twenty-five years on: the $5 million lifeline described in Chapter Four kept NVIDIA alive past 1996, and NVIDIA ultimately grew into a $5 trillion AI monopoly.

After Sega fell, only three players remained in the console market: Sony, Microsoft, and Nintendo. The first two marched down the road described in the preceding ten chapters — the compute arms race, platform lock-in, the Wall Street numbers game. The third took an entirely different path.

But before we tell Nintendo's story, we need to finish Sega's autopsy. Because its cause of death was far more complicated than "too good at technology."

I. Autopsy

To understand why Sega died, you first have to understand what Sega was.

Sega was not Nintendo. Nintendo came from toys — hanafuda cards, playing cards, and only then electronic games. Nintendo's DNA was "fun." Its graphics never needed to be the best; they only needed to serve gameplay.

Sega came from arcades. Its roots were in those machines that cost hundreds of thousands of yen each — hydraulic seats, wrap-around screens, sixty frames per second of real-time 3D without a single dropped frame. Sega's internal development team AM2, led by Yu Suzuki, had been doing something since the 1980s that Nintendo had never attempted: pushing both hardware and experience to their absolute limits simultaneously.

1986: OutRun. Super Scaler technology used high-speed sprite scaling to simulate 3D depth — effects that home consoles of the era couldn't even dream of. The hydraulic cabinet tilted with the screen, letting your body feel the centrifugal force of each turn. Sega called this design philosophy taikan — "bodily sensation."

1993: Virtua Fighter. The world's first fully 3D fighting game, rendering polygons in real time on the Model 1 arcade board. In an era when Street Fighter II had defined the fighting genre in 2D, AM2 leaped straight into 3D, not to show off, but because Suzuki believed three-dimensional space could achieve a depth and authenticity that 2D never could.

1994: Daytona USA. Model 2 board — custom hardware co-developed with GE Aerospace, a full generation faster than any home console. Eight linked cabinets for multiplayer racing, high-speed texture mapping, physically modeled engine roar and tire friction. This wasn't a racing game. It was an immersion device that made you forget you were sitting in an arcade.

Sega never pursued "best graphics" or "best gameplay" in isolation. It pursued both pushed to the limit simultaneously — using the most cutting-edge hardware to create experiences that the body and senses could not refuse. This pursuit didn't exist in Nintendo's DNA. It didn't exist in the spreadsheet logic of Sony and Microsoft that came later. Sega was the closest thing to a craftsman that the platform-holder world has ever seen.

But the craftsman died. Three wounds killed it.

The first wound was self-inflicted. Before the Dreamcast, Sega had launched three pieces of hardware in five years — the Mega CD, the 32X, and the Saturn — each requiring its own game development ecosystem, its own supply chain, its own marketing budget. The Saturn's dual-CPU architecture drove third-party developers mad — only a team like AM2, coding from bare metal, could wring full performance from it. By the time Dreamcast launched, Sega's finances were hemorrhaging and developer trust was evaporating.

The second wound was Sony's killing stroke. At the first E3 in 1995, Sega announced the Saturn was available immediately in North America at $399 — a gamble to seize first-mover advantage. Sony's response is the most famous single blow in gaming history: SCEA president Steve Race walked onstage, dropped his prepared remarks, said one word — "$299" — and walked off. A hundred-dollar price gap killed Saturn's momentum in North America in a single second. But Sony's killing move went beyond price. Ken Kutaragi's team had been touring game studios worldwide since 1994, carrying PlayStation 3D demos, promising low-cost CD manufacturing (an order of magnitude cheaper than cartridges) and a streamlined approval process. The result: Square pulled Final Fantasy VII from Nintendo's camp and gave it to PlayStation. Capcom and Konami followed. Sony didn't steal market share — it stole third-party exclusives. In an industry where a console lives or dies by its software lineup, losing third parties means losing everything.

The third wound was the PS2. March 2000. The launch covered in Chapter Three — Kutaragi standing onstage with Emotion Engine floating-point specs, positioning the PS2 as "the home computer of the twenty-first century." PS2 sold over ten million units in its first year. Dreamcast went from cliff-edge sales decline to discontinuation in under two years.

Three wounds combined: self-inflicted hardware fragmentation consuming finances and developer trust; Sony's tactical marketing stealing the third-party lineup; PS2's overwhelming installed base sealing the last exit.

Sega's leadership did a calculation. Continue making hardware, lose hundreds of millions per year. Exit hardware and become a pure software company — at least survive.

Yu Suzuki built Shenmue on the Dreamcast — development cost was never officially confirmed, but industry estimates range from $47 million to $70 million. Shenmue had a dynamic day-night weather system, NPCs with independent schedules, convenience stores you could walk into, arcade machines you could play. In an era when 3D games were just learning to walk, Suzuki built an entire virtual town. The game was the ultimate expression of Sega's taikan philosophy — and its final elegy.

Sega's exit from the console hardware market sent a signal across the global gaming industry: If even Sega — a company with top-tier arcade technology, top-tier game development capability, and a willingness to pursue perfection regardless of cost — couldn't hold on, then the lesson for anyone thinking about making console hardware was simple: either be too big to fail, or don't enter.

II. Iwata's Arithmetic

May 31, 2002. Satoru Iwata became Nintendo's fourth president. Forty-two years old. His predecessor Hiroshi Yamauchi had retired and handpicked this programmer from HAL Laboratory — not a businessman, not a marketer — to lead a century-old company.

When Iwata took over, Nintendo was being taught a lesson by GameCube's failure. GameCube carried a custom IBM PowerPC CPU codenamed "Gekko" and an ATI GPU codenamed "Flipper" — performance roughly on par with the PS2 and Xbox. Nintendo had earnestly fought a head-on specs war against Sony and Microsoft.

The result: GameCube sold 21.74 million units worldwide. PS2 sold 155 million. Xbox sold 24 million. GameCube finished last among the three: narrowly behind the Xbox, and with roughly one-seventh of the PS2's total.

Iwata was not the kind of person to be paralyzed by failure. He was the kind of person who reached for a calculator.

He did the math.

The Emotion Engine's R&D cost ran into the hundreds of millions. Sony lost money on every PS2 sold in the early period, clawing it back through software licensing fees and the added value of a DVD player. Each Xbox lost over $100, and Microsoft absorbed $4 billion in losses — because its goal wasn't profit, it was blocking Sony (the story of Chapter Three).

Both rivals were trading losses for market share. And the cost of this war would only climb with every new generation. GPU performance doubled every two years. R&D costs doubled every two years. But game prices couldn't go up — consumers' psychological anchor for a game sat between $50 and $60, unchanged for a decade.
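The squeeze in that arithmetic can be made concrete. The sketch below takes the "doubling every two years" figure at face value (it is a rule of thumb, not measured data) and asks how far costs run ahead of a game price that stays flat. The function name `cost_multiplier` and the sample horizons are illustrative assumptions, not figures from any company's accounts.

```python
def cost_multiplier(years: float, doubling_period: float = 2.0) -> float:
    """How many times larger a cost becomes if it doubles every `doubling_period` years."""
    return 2 ** (years / doubling_period)


# With a flat game price, the number of copies needed to recoup R&D
# grows by exactly the same factor as the R&D cost itself.
for years in (2, 6, 10):
    factor = cost_multiplier(years)
    print(f"after {years:>2} years: costs x{factor:.0f}, "
          f"copies needed to break even x{factor:.0f}")
```

Under that assumption, a decade of flat prices means selling thirty-two times as many copies to stand still, which is the shape of the problem Iwata was looking at.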

Iwata didn't need other companies' corpses to teach him arithmetic. GameCube's own failure was clear enough: Nintendo had fought its rivals spec for spec and sold the fewest units. And the industry's cost structure was right there on the page. If Nintendo kept competing on compute power against Sony and Microsoft, it would either be crushed by costs or have to subsidize losses from a larger empire. But Nintendo had no Windows empire to draw on, and it could not count on Wall Street's patience. Sega's exit was corroborating evidence, not the cause. The real cause was the numbers on the calculator.

He made a decision that nearly every gaming outlet mocked at the time.

Exit the performance race.

III. The Cost of Not Fighting

November 19, 2006 — two days after PS3 — the Wii launched in North America. $249.

The Wii's hardware specs were treated as a joke in the gaming community. Its CPU was IBM's Broadway — essentially an overclocked version of GameCube's Gekko. Its GPU was ATI's Hollywood — an upgrade of GameCube's Flipper. The entire machine's performance was roughly a quarter to a third of the PS3 and Xbox 360.

Sony's PS3 used the Cell Broadband Engine — a monster chip jointly developed by IBM, Sony, and Toshiba at an R&D cost exceeding $3 billion. Microsoft's Xbox 360 ran IBM's triple-core Xenon CPU paired with ATI's Xenos GPU — among the most advanced consumer graphics hardware of 2005.

The Wii was running what was essentially the previous generation's hardware.

But the Wii did something the PS3 and Xbox 360 couldn't: it booted in two seconds and you could play. Its motion controller let a seventy-year-old grandmother play tennis. Its price point required no parental hesitation.

Wii lifetime sales: 101.63 million. PS3: 87.4 million. Xbox 360: 85.8 million.

Iwata won.

But we need to stop here and ask the question this book's readers should be in the habit of asking by now: What was the cost?

The cost: Nintendo missed every stage of the foundational technology build-up on the road to AI supremacy.

The Wii pushed no advanced semiconductor processes. Its chip used 90nm, and this was 2006, the year Intel was already ramping 65nm production. The Wii's GPU had no programmable shader pipeline, the architectural innovation described in Chapter Seven that enabled NVIDIA to transform gaming GPUs into general-purpose compute platforms. From that transition, the Wii was completely absent.

The Wii contributed no large-die yield data to TSMC. It paid not a single cent of R&D tax into the CUDA ecosystem. Its existence, on the invisible supply chain from gaming to AI described in Chapters Seven and Eight, was a zero.

Nintendo did not participate in forging the foundational infrastructure of AI supremacy. It withdrew from the arms race, preserved its finances, and in doing so permanently disconnected from the front line of humanity's push against technological limits.

This is not a moral judgment. It is a structural observation. Nintendo's choice let it survive — and surviving let it do something that, in the context of this book, is far more interesting.

IV. The Island

While every other gaming giant was doing the same thing, Nintendo was doing the opposite.

Chapter Ten dissected that thing in detail: Microsoft gutting Xbox's studios and redirecting resources to AI infrastructure. Sony, under Jim Ryan's direction, forcing first-party studios to pivot to live-service games, shutting down Japan Studio, spending $200 million on a game — Concord — that lasted fourteen days. Both companies followed the same logic: convert one-time transactions into recurring revenue. Pay Wall Street its protection money.

Nintendo didn't pay that protection money.

The reason was simple — Nintendo is not a U.S.-listed company. It trades on the Tokyo Stock Exchange, with a shareholder base dominated by Japanese institutional investors and long-term holders. Wall Street's quarterly revenue pressure, the American public company's "recurring revenue obsession" — these carry far less weight in Nintendo's boardroom than in Microsoft's or Sony's.

March 3, 2017. The Nintendo Switch launched. $299.

The Switch's heart was NVIDIA's Tegra X1 — a mobile chip released in 2015. 20nm process. 256 CUDA cores. On the gaming hardware spectrum of 2017, its performance was roughly a quarter to a third of the PS4.

Once again, the gaming press's first reaction was mockery.

Once again, Nintendo won.

Switch cumulative sales exceeded 146 million units — surpassing the Wii to become the best-selling console in Nintendo's history. The Legend of Zelda: Breath of the Wild. Animal Crossing. Mario Kart 8. These games didn't need ray tracing, didn't need 4K textures, didn't need 120 frames per second. They needed to be fun.

And throughout the Switch's entire lifecycle — 2017 to 2024 — Nintendo stubbornly did several things that Microsoft and Sony did not:

Its first-party games were sold as complete purchases. No season passes. No battle passes. No "pay ¥1,000 to unlock a limited-edition skin." You paid $60 for Breath of the Wild, and you got a complete game.

It didn't close a single first-party studio. Didn't lay off thousands. Didn't cancel in-progress projects to dress up the balance sheet.

It didn't force its studios to make live-service games. No Jim Ryan–style company-wide pivot mandate.

While Microsoft and Sony treated players as ATMs to subsidize R&D costs and treated studios as assets to be dismantled and reassembled at will, Nintendo did something that looked stupid — it treated players as customers.

But here we need to correct an illusion that might be forming: Nintendo is not an "open" company. Far from it.

In 1985, a decade before DirectX and two decades before CUDA, Nintendo embedded a chip called 10NES in every licensed NES cartridge. When the console powered on, it performed a handshake authentication with the cartridge chip. Unauthorized cartridges got a black screen. This was the first hardware-level lock in the history of the gaming industry. The accompanying licensing regime was draconian: third-party developers faced annual title limits, mandatory exclusivity periods, and cartridges manufactured exclusively by Nintendo. From the NES to the Switch 2, every generation of Nintendo hardware has had its own proprietary cartridge, disc, or card format, its own authentication mechanism, its own closed developer program. From the NES through the 3DS, there was also hardware-level region locking.
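The shape of that handshake, a console-side lock checking a cartridge-side key at power-on, can be sketched in a few lines. This is a toy model, not the actual 10NES algorithm: the `Chip` class, the `console_boots` function, the shared secret, and the use of SHA-256 are all illustrative assumptions standing in for whatever the real chips computed.

```python
import hashlib
from typing import Optional


def expected_stream(secret: bytes, nonce: int, length: int = 8) -> bytes:
    """Derive the short byte stream a chip holding `secret` would emit (toy stand-in)."""
    return hashlib.sha256(secret + nonce.to_bytes(4, "big")).digest()[:length]


class Chip:
    """Hypothetical model of a lock chip (console) or key chip (cartridge)."""

    def __init__(self, secret: bytes) -> None:
        self.secret = secret

    def respond(self, nonce: int) -> bytes:
        return expected_stream(self.secret, nonce)


def console_boots(lock: Chip, key: Optional[Chip], nonce: int) -> bool:
    """The console runs the game only if the cartridge's stream matches its own."""
    if key is None:  # cartridge carries no authentication chip at all
        return False
    return lock.respond(nonce) == key.respond(nonce)


lock = Chip(b"licensed-secret")            # inside the console
licensed_cart = Chip(b"licensed-secret")   # chip supplied with a licensed cartridge
unlicensed_cart = Chip(b"guessed-secret")  # third party without the secret

print(console_boots(lock, licensed_cart, nonce=1985))    # True
print(console_boots(lock, unlicensed_cart, nonce=1985))  # False
print(console_boots(lock, None, nonce=1985))             # False
```

The point of the sketch is structural: whoever controls the supply of matching key chips controls which cartridges run, which is why the licensing terms could be enforced in hardware.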

Nintendo built the most stringent lock in the history of the gaming industry. But this lock had one characteristic that made it fundamentally different from every lock described in the preceding ten chapters: It never spread outward.

10NES didn't affect PCs. The Switch's closed ecosystem doesn't affect Steam. Nintendo never attempted to make its own technology the industry standard, never entered the DirectX vs. Vulkan standards wars. Its stance has never wavered: enter my garden, play by my rules. Don't want to come in? I don't care.

In April 2026, someone got Steam running on a hacked original Switch — Valve's Proton had just added ARM64 support, and the Switch happened to use an ARM chip. Nintendo's response wasn't openness. It was fortifying the wall: the Switch 2 user agreement explicitly states that installing unauthorized software may result in permanent disabling of the entire console.

Forty years ago, 10NES made unauthorized cartridges show a black screen. Today the countermeasure has been upgraded to account bans and remote bricking. The tools changed. The logic hasn't changed by a single word.

Microsoft's DirectX is a weapon — a weapon for conquering the world outside. Nintendo's lock is a castle wall — for protecting the garden within. Both are locks. But one spreads outward. The other contracts inward.

V. NS2: A Crack in the Island

But the story doesn't end here. Because on June 5, 2025, something happened that cracked a fissure in the "Nintendo doesn't participate in the arms race" narrative.

The Nintendo Switch 2 launched. $449.99.

The most expensive console in Nintendo's history. 50% more than the original Switch. Nearly double the Wii's $249 in 2006.

More noteworthy was the chip inside.

The Switch 2's heart is NVIDIA's custom Tegra T239 — codenamed Drake. This is not a chip born from "withered technology." It's based on NVIDIA's Ampere architecture — the same architectural generation as the RTX 3080. 1,536 CUDA cores. RT cores for real-time ray tracing. Tensor cores — NVIDIA's dedicated AI compute units. Die area of 207 square millimeters — nearly twice the original Switch's Tegra X1. 12GB LPDDR5X memory.

The chip's most critical feature is DLSS — Deep Learning Super Sampling. NVIDIA's AI upscaling technology. It allows the Switch 2 to render games at a lower native resolution, then use the Tensor cores' AI computation to "upscale" the image to 1080p or even 4K.
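A little pixel arithmetic shows why this matters on a handheld power budget. The sketch below only counts pixels; it does not model DLSS itself. The 720p internal render resolution is a hypothetical example, since actual render resolutions vary by game and mode.

```python
# Standard resolutions as (width, height) in pixels.
RESOLUTIONS = {
    "720p": (1280, 720),
    "1080p": (1920, 1080),
    "4K": (3840, 2160),
}


def pixels(name: str) -> int:
    width, height = RESOLUTIONS[name]
    return width * height


def upscale_factor(render: str, output: str) -> float:
    """Output pixels produced per pixel the GPU actually rendered."""
    return pixels(output) / pixels(render)


for render, output in (("720p", "1080p"), ("1080p", "4K"), ("720p", "4K")):
    print(f"{render} -> {output}: {upscale_factor(render, output):.2f}x the pixels")
```

Rendering at 1080p and presenting at 4K means the shader cores draw only a quarter of the pixels on screen; the rest are reconstructed by the upscaler.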

Inside Nintendo's new console, an NVIDIA AI chip is running.

BOM (bill of materials) estimates range from $330 to $400. If accurate, this means that Nintendo — a company whose iron rule has always been "hardware must be profitable" — may be operating on razor-thin margins or even losses on the Switch 2.
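The margin in question is easy to bound from the two numbers above. The sketch below uses only the $449.99 launch price and the $330 to $400 BOM estimates from this chapter. Everything else a console has to pay for (retailer cut, assembly, shipping, R&D, marketing) is left out because the chapter gives no figures for it, so the real margin is lower than what this prints.

```python
RETAIL_PRICE = 449.99               # Switch 2 launch price (USD)
BOM_LOW, BOM_HIGH = 330.00, 400.00  # estimated bill-of-materials range (USD)


def gross_margin(price: float, bom: float) -> float:
    """Dollars left per unit after components only."""
    return price - bom


def margin_share(price: float, bom: float) -> float:
    """The same margin as a fraction of the retail price."""
    return (price - bom) / price


for bom in (BOM_LOW, BOM_HIGH):
    print(f"BOM ${bom:.0f}: ${gross_margin(RETAIL_PRICE, bom):.2f} per unit "
          f"({margin_share(RETAIL_PRICE, bom):.1%} of price) before all other costs")
```

Between roughly $50 and $120 per unit has to cover every non-component cost, which is how a console priced at $449.99 can still sit near break-even.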

The T239 chip is fabricated by Samsung Foundry using Samsung's 8nm process (technically a derivative of its 10nm node) — the same process node used for NVIDIA's RTX 3090. Not TSMC.

Here a subtle but important fact emerges: Nintendo's console chip orders did not enter TSMC's capacity pool. Chapters Eight and Ten emphasized that the long-term SoC orders from PS5 and Xbox Series X serve as ballast in TSMC's capacity planning. The Switch 2's orders went to Samsung — meaning that Nintendo's hardware existence is, once again, a zero on the "console → TSMC → AI chip" supply chain described in Chapter Eight. Even as it finally joined the compute race, it still hasn't stepped onto that track.

But the Switch 2 did something else.

In its first four days, it sold 3.5 million units worldwide. The fastest launch in Nintendo's history. In 2025 — the year Microsoft's Xbox was being dismantled, the year Sony's first-party studios were drowning in the wreckage of live-service failures, the year the global gaming hardware market was supposedly shrinking — a $450 console, powered not by compute specs, not by an AI narrative, not by a recurring-revenue pitch for Wall Street, but by "this machine has Mario and Zelda on it," sold 3.5 million in four days.

While everyone else was dismantling games, only Nintendo was still making them. And consumers voted with their wallets for the one that was still making games.

VI. Closing Arguments

In the preceding ten chapters, the pattern appeared eight times. Each variation took a different form — offensive, defensive, accidental, bidirectional lock-in — but the core logic was the same: attract users with convenience, lock them in through dependency, turn users into appendages of the platform. And every lock shared one characteristic: it spread outward, penetrating platform boundaries, affecting the world beyond.

In this chapter, the pattern inverted.

Sega didn't build a lock — it never lived long enough to lock down an ecosystem. Dreamcast's online service SegaNet had the embryo of lock-in, but PS2 killed it before it could take shape. Sega's exit was not a choice. It was a sentence.

Nintendo built a lock — the earliest and most severe in gaming history. 10NES predated DirectX by a decade. But its lock was walled-garden type: boundaries ending at its own platform, never spreading outward, resetting to zero with each console generation. No one in 2025 is locked anywhere because of 1985's 10NES.

Three relationships to the lock. Three outcomes.

Expansive lock-in — Microsoft, NVIDIA, TSMC, Valve — became the infrastructure of tech hegemony. Costs borne downstream. Deferred bills arriving two or three decades later.

Walled-garden lock-in — Nintendo — survived for forty years, but remained irrelevant to the mainline of tech hegemony. A garden, not a supply chain.

No lock — Sega — death.

Nintendo chose. It chose not to participate in the compute arms race, from Wii to Switch, for eighteen years. It chose to draw the boundary of its lock at its own walls, never attempting to lock down the world beyond. That choice has two sides.

One side is cost: Nintendo is absent from Chapter Seven's CUDA supply chain, absent from Chapter Eight's TSMC yield-testing crucible, absent from Chapter Nine's x86 vs. ARM architecture war. Its contribution to the foundational infrastructure of the AI revolution is zero. From the perspective of technology history, Nintendo spent those eighteen years as a brilliant spectator.

The other side is reward: Nintendo is the only company among all the characters in this book that, in 2025, still treats games as an end rather than a means. Microsoft treated games as a defensive weapon for Windows — used, then dismantled. Sony treated games as a revenue pipeline — reconfigured whenever the form didn't fit. NVIDIA treated gamers as R&D tax payers — once CUDA was fed, pivot to AI. TSMC treated gaming chips as stress tests for advanced nodes. Every company was exploiting games. Only Nintendo was still making them.

But the Switch 2 has broken something. $449. NVIDIA Ampere. DLSS. Tensor cores. For the first time, Nintendo put AI technology inside its console — not to do AI, but to let a handheld produce near-4K visuals. It's paying higher hardware costs now. It's drifting further from "withered technology."

Nintendo's island is being eroded by the tide. Not because Nintendo has changed — but because the hardware cost of "making a fun game console" in 2025 no longer allows anyone to stay independent on the cheap.

Sega died by being ahead of its time. Nintendo survived by being behind it. But the times are catching up.

VII. The Spoils Are Still Being Divided

In 2001, before exiting the console hardware market, Sega sold its NVIDIA shares. Bought for $5 million. Sold for $15 million. Three times its money.

In 2025, NVIDIA's market capitalization exceeded $5 trillion. That original $5 million investment, had it been held, would be worth tens of billions of dollars.

Sega didn't wait for that day. It couldn't afford to.

And Shoichiro Irimajiri — the man who, in 1996, made a decision that violated every business textbook and threw the last life preserver to NVIDIA — disappeared from public view after stepping down as Sega's president in 2000.

Irimajiri never knew what he had done. He didn't know that $5 million would become the RIVA 128, then GeForce, then CUDA, then the H100, then a chokehold on the global AI industry. He just thought a young man deserved another chance.

Sega is the purest character in this book. No conspiracies, no lock-in, no Wall Street numbers games. It pushed the frontier to make better games, gave generously out of principle, and exited to survive. Every one of its decisions was the decision a game maker would make — not a platform builder.

And Nintendo — the company that survived in the same market after Sega fell — is now facing a question Sega never lived long enough to confront: As the cost of maintaining an island keeps rising, how long can you remain independent?

The Switch 2's $449 is already Nintendo's first concession to reality. What comes next? Next-gen consoles on TSMC's process? Joining the PS6 and next Xbox in a full compute arms race? Using DLSS 4.0 AI upscaling while still insisting on complete, one-time-purchase games?

No one knows.

But one thing is certain. In the forty years recorded by this book, two companies proved something: you can refuse to participate in expansive platform lock-in, you can refuse to participate in the monopoly race, you can even refuse to participate in building the foundational infrastructure of AI hegemony.

The cost is that you will never become a $5 trillion giant.

The reward is that the thing you make is still a game.

That is the question for the final chapter: after this forty-year sprint, how much are those three words, "still a game," actually worth?