Chapter 4: Sega's Five-Million-Dollar Mercy

In 2025, a machine learning engineer complained on his company's Slack: training a mid-sized language model requires eight NVIDIA H100s running for three weeks. One H100 costs over $30,000. His company waited in line for six months just to get the shipment. Every company in the world that wants to do AI is waiting in that exact same line.

He writes his models in PyTorch. Beneath PyTorch is CUDA. CUDA only runs on NVIDIA GPUs. He has tried AMD's ROCm: the documentation is incomplete, the community is dead, and the ecosystem is barren. He has heard of Google's TPU, but that is Google's own hardware; outsiders can rent the compute, but they can't buy the chips.

He knows perfectly well that a single company has the entire AI industry by the throat. But he doesn't find this surprising. NVIDIA has been making GPUs for thirty years, and its accumulated expertise runs deep; a monopoly like this is built on time and capability. It's perfectly natural.

No, it isn't.

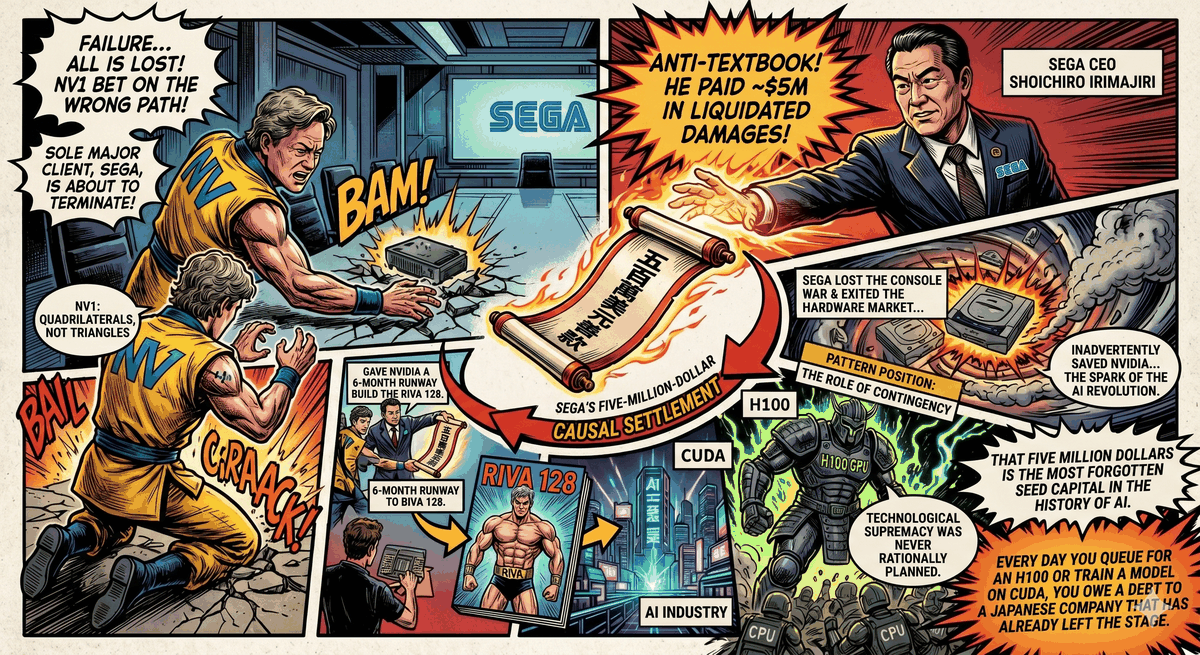

NVIDIA almost ceased to exist in 1996.

That year, it had less than six months of cash left. Its core technical roadmap had been proven to be a dead end. Its only major client was preparing to terminate their contract. According to the standard Silicon Valley script, it should have quietly disappeared that year, becoming just another name among the dozens of graphics card companies that perished in the mid-nineties.

It didn't disappear. Because a Japanese man whose own company was also dying did something that violated every business textbook.

I. Motive for the Crime

In January 1993, three engineers sat down in a Denny's diner in San Jose, California, and decided to found a company. Thirty-year-old Jensen Huang was the youngest among them. He had been at both LSI Logic and AMD, doing chip design as well as technical marketing—this kind of dual-role experience spanning engineering and business wasn't unheard of in Silicon Valley at the time, but it wasn't exactly common either. The other two co-founders, Chris Malachowsky and Curtis Priem, were both hardware engineers from Sun Microsystems.

What they wanted to do was, in today's language, very simple: make a graphics card that could make PC game visuals look beautiful. In 1993, 3D graphics on the PC was still a wasteland. Carmack, mentioned in the previous chapter, was using pure CPU power to brute-force render every pixel of Doom; consumer-grade 3D acceleration hardware was virtually non-existent in the market. The opportunity Jensen Huang saw was this: if they could make a chip specifically for processing 3D calculations, game visuals would take a qualitative leap, and the number of players willing to pay for this was exploding.

But at that diner table, Jensen Huang made a decision—a decision that almost buried the entire company.

He chose a technical path completely different from everyone else's.

II. Forging the Weapon — The Wrong One

In 1995, NVIDIA launched its first product: the NV1.

The NV1's design ambition was immense. It wasn't just a graphics card—it integrated 2D graphics, 3D rendering, audio processing, and even a port for the Sega Saturn controller. One card replacing three. To consumers, this sounded beautiful.

But the NV1 had a fundamental problem hidden deep within its 3D rendering engine.

At the time, all 3D graphics systems—OpenGL, the upcoming Direct3D, Hollywood workstations, and every 3D game in development—used the exact same basic unit: the triangle. Slice the surface of any 3D object into enough triangles, map a texture to each triangle, stitch them together, and you have a passable model. The triangle was the universal language of the entire 3D graphics industry; from academic papers to hardware circuits, everyone was designing around triangles.

NVIDIA didn't use triangles.

The technical architecture led by Curtis Priem chose a method called "quadratic texture mapping"—replacing triangles with quadrilaterals, and then using mathematical curves to bend the surfaces of the quadrilaterals. In theory, this method could draw smoother curved surfaces using fewer geometric units. A sphere might require hundreds of triangles to construct; theoretically, a quadrilateral system could define it with just four.
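To put a rough number on the triangle side of that comparison, here is a minimal Python sketch. It assumes a latitude/longitude ("UV") sphere mesh, which is one common way to tessellate a sphere; the text does not say which tessellation it has in mind. The function names and the `stacks` and `slices` parameters are illustrative only and come from no real modeling tool or from NVIDIA's hardware.

```python
import math


def uv_sphere_triangle_count(stacks: int, slices: int) -> int:
    """Count the triangles in a latitude/longitude sphere mesh.

    stacks: number of horizontal bands from pole to pole (at least 2)
    slices: number of vertical wedges around the axis (at least 3)
    """
    if stacks < 2 or slices < 3:
        raise ValueError("need at least 2 stacks and 3 slices")
    # The two polar caps are triangle fans: `slices` triangles each.
    cap_triangles = 2 * slices
    # Every other band is a ring of `slices` quads, split into 2 triangles each.
    band_triangles = 2 * slices * (stacks - 2)
    return cap_triangles + band_triangles


def facet_gap(slices: int, radius: float = 1.0) -> float:
    """Largest gap between a circle and the regular polygon inscribed in it.

    A crude measure of how visibly "faceted" the sphere's silhouette is.
    """
    return radius * (1.0 - math.cos(math.pi / slices))


if __name__ == "__main__":
    for stacks, slices in [(8, 16), (16, 32)]:
        count = uv_sphere_triangle_count(stacks, slices)
        print(stacks, slices, count, round(facet_gap(slices), 4))
    # (8, 16) gives 224 triangles; (16, 32) gives 960.
```

Even a coarse sphere lands in the hundreds of triangles, and doubling the resolution roughly quadruples the count while only shrinking the faceting, never removing it. That is the cost the curved-surface approach promised to avoid.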

From a purely mathematical perspective, quadrilaterals have their elegance. But engineering is not math.

The problem was that no software was written for quadrilaterals. No 3D modeling tool output quadrilaterals. No game engine supported quadrilaterals. Even more fatally, when Microsoft officially released the Direct3D specification in 1996, it supported only triangles.

Overnight, NVIDIA's entire technical architecture became an isolated island.

The NV1 failed miserably in the market. It was too expensive, its audio quality was mediocre, its 2D speed was inferior to dedicated 2D graphics cards, and its only killer feature—3D acceleration—was almost unsupported by games because it was incompatible with all standards. The only 3D games that could run on the NV1 were a few old titles ported from the Sega Saturn—because the Saturn also happened to render using quadrilaterals.

This is where Sega enters the story.

III. A Contract Destined to Fail

The relationship between Sega and NVIDIA began with those few Saturn game ports on the NV1. The NV1's quadrilateral architecture was similar to the Saturn's rendering method, making porting relatively easy—this was a unique selling point in the PC market of 1995, even if it didn't sell well.

But Sega saw another possibility. It was planning its next-generation console, a project whose internal codenames included "Saturn V08" and which would eventually become the Dreamcast. Sega needed a graphics processing chip. NVIDIA had proven its ability to design media acceleration chips, and the quadrilateral architecture was at least compatible with Sega's past hardware DNA.

In mid-1995, Sega signed a contract with NVIDIA: NVIDIA would develop the graphics chip for Sega's next-generation console, codenamed NV2.

NV2 continued the NV1's quadrilateral path.

This decision was not entirely unreasonable at the time. In 1995, the standards war between triangles and quadrilaterals was not yet completely settled. Direct3D had not yet shipped, OpenGL primarily ran on workstations, and 3D acceleration in the PC gaming market was just getting started. Priem and Huang believed that if the NV2 could succeed in Sega's next console, quadrilaterals had a chance to become a standard parallel to triangles.

But they underestimated one thing: Microsoft's speed.

In 1996, Direct3D entered its rapid iteration phase. Every new version strengthened the feature set for triangle rendering. More importantly, Microsoft controlled Windows, and the previous chapter already explained how lethal that card is. Every graphics card manufacturer that wanted to sell into PC gaming had to support Direct3D, and Direct3D only spoke the language of triangles. 3dfx's Voodoo, ATI's Rage, Matrox, Rendition: the entire market revolved around the triangle.

And NVIDIA was still trapped on the island of quadrilaterals, holding a contract designed for quadrilaterals.

The development of the NV2 lasted over a year. Prototype chips were sent to Sega for demonstration. The demonstration failed. Inside Sega—especially the arcade division AM2—voices began loudly opposing the quadrilateral path, demanding a switch to triangles. Inside NVIDIA, arguments among the co-founders were also heating up. Priem insisted that quadrilaterals had a technical advantage; Huang began to waver.

By mid-1996, Jensen Huang faced a painful choice.

Continue making the NV2, deliver a quadrilateral chip, and fulfill the contract—but this chip won't be compatible with Direct3D, effectively disconnecting it from the entire PC ecosystem. NVIDIA will have revenue, but no future.

Or, abandon the NV2, admit the technical roadmap was a mistake, and pivot immediately to triangles—but what about the contract? What about Sega's money? The company's remaining cash won't last six months.

Jensen Huang later recalled this moment on multiple occasions. His exact words were: "Whichever path we took, we were going to go out of business."

IV. A Phone Call That Shouldn't Have Been Made

Jensen Huang flew to meet Shoichiro Irimajiri.

Shoichiro Irimajiri was the CEO of Sega of America at the time. His resume was much thicker than those of most gaming industry executives. In his early years, he worked as an engineer at Honda, participated in the development of F1 racing engines, later moved into management, and was ultimately poached by Sega. He was the kind of person rarely seen in corporations: an executive who had gotten his hands dirty. He had seen engines blow cylinders, and he had seen product lines get axed. He understood technical risk not because he had read case studies, but because he had personally built things that exploded.

Huang didn't bring a progress report. He brought a sentence that almost no one in Silicon Valley dares to say to a client—

We cannot build what you want.

The NV2's technical roadmap was a dead end. Quadrilaterals were not going to become the industry standard, and continuing would only waste both Sega's and NVIDIA's time. NVIDIA had to abandon this path and immediately pivot to triangles, or the company would die.

Then he said something even more unbelievable—

But we need you to pay us.

NVIDIA had failed to deliver the product required by the contract. According to normal business logic, Sega should have terminated the contract, demanded the money back, and perhaps even sued for damages. NVIDIA was the first to breach the contract; Sega had a hundred reasons not to pay a single cent.

And Sega itself wasn't exactly a wealthy company. The Saturn was being crushed globally by the PlayStation, barely holding on in the Japanese market, and almost completely defeated in the West. Every cent of cash Sega had should have been used to prepare for the launch of the Dreamcast—finding a new chip supplier, signing new game development contracts, doing marketing. Paying a supplier who couldn't deliver the goods is the wrong answer in any management textbook.

Shoichiro Irimajiri listened to what Jensen Huang had to say.

He chose the answer that wasn't in the textbook.

Sega invested $5 million in NVIDIA. Not a penalty payment, but an investment: Sega bought shares in NVIDIA. The reason Irimajiri was later quoted as giving was simple to the point of naivety: he thought Jensen Huang was a trustworthy young man.

Huang later recalled: "His understanding and generosity gave us six months to live."

Six months.

V. Six Months of Rebirth

After getting Sega's money, Jensen Huang did three things.

First, he slashed the company's headcount from over a hundred people to thirty-five. Everyone who stayed was an engineer. No marketing, no administration, no roles unrelated to "getting the chip designed."

Second, he terminated all development related to quadrilaterals. The NV2's code, the NV1's architecture, Priem's two-plus years of hard work—everything went to zero. The new chip's codename was NV3. The architecture started from the very first line of code, speaking only the language of triangles, born solely for Direct3D.

Third, he used the remaining money to buy a hardware emulator.

This emulator was the most crucial move in the entire gamble. The traditional chip design process is: design the circuit, send it to the foundry for manufacturing, get the finished product back for testing, find bugs, modify the design, and send it back for manufacturing. One cycle takes at least one to two years. NVIDIA didn't have two years. It didn't even have one year. Jensen Huang used the hardware emulator to run and test the entire chip design before any silicon existed, fixing the bugs first and only then sending it to manufacturing: "measure twice, cut once." The entire design cycle was compressed to about seven months.

In April 1997, the NV3 was officially announced. It had a new name: RIVA 128.

The RIVA 128 was not a great graphics card. Its drivers were notoriously rough in the early days, its OpenGL support was incomplete, and its 2D image quality was merely passable. But it achieved one thing the NV1 never did—it ran Direct3D, and it ran it fast and stable.

A 128-bit memory bus, a 100 MHz core clock, 1.5 million triangles per second: these numbers weren't the absolute best in the PC graphics card market of 1997, but they were enough to enter the top tier. More importantly, it was cheaper than 3dfx's Voodoo, and because 2D and 3D were on the same card, consumers didn't need to install two cards at once as they did with the Voodoo.
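The 128-bit figure describes the width of the memory interface, and a bandwidth only follows once it is multiplied by a clock. The back-of-the-envelope Python sketch below does that arithmetic. It assumes the memory ran at the same 100 MHz as the core and moved one transfer per clock; both are assumptions made for illustration, since the text gives only the core clock. The function name is hypothetical.

```python
def peak_bandwidth_bytes_per_s(bus_width_bits: int, clock_hz: int,
                               transfers_per_clock: int = 1) -> int:
    """Theoretical peak memory bandwidth: bytes per transfer times transfers per second."""
    bytes_per_transfer = bus_width_bits // 8
    return bytes_per_transfer * clock_hz * transfers_per_clock


# Figures quoted in the text.
BUS_WIDTH_BITS = 128
CORE_CLOCK_HZ = 100_000_000
TRIANGLES_PER_S = 1_500_000

# Assumption: memory clock equals the 100 MHz core clock, one transfer per clock.
bandwidth = peak_bandwidth_bytes_per_s(BUS_WIDTH_BITS, CORE_CLOCK_HZ)

# Average clock cycles available per triangle at the quoted throughput.
clocks_per_triangle = CORE_CLOCK_HZ / TRIANGLES_PER_S

if __name__ == "__main__":
    print(f"peak bandwidth: {bandwidth / 1e9:.1f} GB/s")       # 1.6 GB/s
    print(f"clocks per triangle: {clocks_per_triangle:.1f}")   # 66.7
```

These are ceilings, not measurements: real throughput depends on drivers, memory access patterns, and the size of the triangles being drawn.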

In January 1998, NVIDIA shipped its one-millionth RIVA 128.

In March of the same year, NVIDIA signed a strategic partnership agreement with TSMC.

This point in time is worth pausing on, because it is the foreshadowing for Chapter 8. The NVIDIA of 1998 was still a small company that had just come back to life, and TSMC was not yet the irreplaceable entity it is today. But from this moment on, TSMC would be NVIDIA's principal foundry. More than a quarter century later, NVIDIA's H100, the chip behind much of today's large-model training, still comes from TSMC's production lines. The starting point of this supply chain is in that 1998 contract.

And that contract existed because NVIDIA survived 1996.

NVIDIA survived 1996 because a Japanese man paid $5 million.

VI. The Interest on the Charity

The subsequent fate of Shoichiro Irimajiri forms a cruel contrast with the company he saved.

In 1998, he was promoted to President of Sega worldwide. Under his supervision, the Dreamcast adopted the PowerVR chip, a collaboration between NEC and VideoLogic, in place of the NV2 that NVIDIA had abandoned. The Dreamcast launched in Japan at the end of 1998, featuring ahead-of-its-time online capabilities and stunning visuals.

But the PS2 arrived.

In March 2000, Sony's PlayStation 2 launched in Japan, with lines winding around several blocks. Ken Kutaragi's promise of "the home computer of the twenty-first century," made at the press conference that kept Gates awake in the previous chapter, was becoming a reality. The PS2 sold over ten million units in its first year. Dreamcast sales fell off a cliff.

In 2000, Shoichiro Irimajiri resigned from his position as President. Sega suffered losses for the third consecutive year.

In January 2001, Sega announced its exit from the console hardware market. Dreamcast production ceased that March. A company that had been making game consoles since 1983 left the hardware battlefield forever under the pressure of the PS2.

Before exiting, Sega sold the shares it held in NVIDIA. The stock originally bought for $5 million was sold for $15 million. With this money, Sega slightly alleviated the financial pressure of exiting the console business.

$15 million. A triple return. By venture capital standards, this was a good deal.

But if Sega hadn't sold—

In 2025, NVIDIA's market capitalization exceeds $3 trillion. That original $5 million investment, converted by share price, would be worth tens of billions of dollars.

Sega didn't wait for that day. It couldn't afford to wait.

VII. Closing Arguments

This chapter is not a story about investment foresight. It is a story about contingency.

Let's tally the bill.

Shoichiro Irimajiri gave NVIDIA $5 million in 1996. That money kept NVIDIA alive for six months. In those six months, NVIDIA created the RIVA 128, pivoted to triangle rendering, and tapped into the Direct3D ecosystem. In 1998, NVIDIA signed with TSMC. In 1999, the GeForce 256 was released, the first chip to be marketed as a "GPU." In 2006, Jensen Huang bet on CUDA, turning the GPU from a game engine into a general-purpose parallel computing platform. In 2012, AlexNet proved that deep learning needed GPUs. In 2022, ChatGPT launched, and the H100 became the chip every AI lab was queuing for.

In the middle of this causal chain, there are countless nodes that could have broken. But the most fragile one—the one closest to going to zero—was the moment in 1996 when Shoichiro Irimajiri said "yes."

He wasn't making an investment decision. The company in front of him had failed products, a wrong technical roadmap, cash hitting rock bottom, and no deliverable results. Every number said "no." He said "yes" because, according to later accounts, he felt Jensen Huang was an honest man who deserved one more chance.

Here a variation of the pattern this book tracks appears.

The patterns in the previous two chapters were offensive: DirectX used convenience to attract developers, then used the lock-in effect to bind the ecosystem (Chapter 2); Xbox used losses to block the flank and drain the opponent's ambition (Chapter 3). These are the strategic actions of large corporations—rational, calculable, with ROI models.

The pattern in this chapter is contingent: before offensive lock-in can occur, someone must first survive. And surviving, sometimes, relies not on strategy, but on a single person making a decision in a single moment with no rational basis.

NVIDIA's subsequent CUDA monopoly, which Chapter 7 will expand upon, is textbook platform lock-in. But the prerequisite for the CUDA monopoly is that NVIDIA re-entered the triangle world in 1997, became the definer of the GPU in 1999, and had enough market position in 2006 to place a ten-year bet. And the prerequisite for all of this was a $5 million act of charity.

Business textbooks will tell you: building a monopoly is a systematic engineering project. Correct. But the first brick of that project is sometimes a person's intuition, a meal that shouldn't have been eaten, or a phone call that shouldn't have been made.

VIII. The Loot is Still Being Divided

Return to the machine learning engineer from the opening of this chapter, complaining about the H100 queue in 2025.

NVIDIA has him by the throat. The causal chain for this did not start with CUDA, did not start with GeForce, and didn't even start with the founding of NVIDIA. Its true starting point was a Japanese game company executive who, at the moment his own company was about to sink, threw his last life preserver to someone else.

The absurdity of this bill is: Sega signed a graphics chip contract to build a game console; the contract failed, and Sega chose to pay rather than collect the debt; that money saved a company; that company spent twenty years building the monopoly that today has the entire AI industry by the throat.

Is Sega a victim? Not entirely. It got a triple return; it just sold too early. Is NVIDIA a beneficiary? Unquestionably. But the true victims are the AI researchers locked in by CUDA in 2025: they are paying interest on an act of charity from 1996.

And after Sega exited the console battlefield, the position it vacated was coincidentally filled by an American company. That company just made its appearance in the previous chapter—it used an NVIDIA chip to build a living room console running Windows.

The GPU inside the Xbox, codenamed NV2A, was based on the GeForce 3 architecture. The ancestor of the GeForce 3 was the RIVA 128. The RIVA 128 existed because of Shoichiro Irimajiri's $5 million.

The lines have connected.

But the lines aren't finished connecting. NVIDIA won another console contract in 2000, this time from Microsoft: it would supply the GPU for the Xbox. This contract gave NVIDIA its first taste of the console market, and also taught it its first lesson about the profit structure of console contracts. Microsoft demanded year-over-year price cuts, NVIDIA refused, and the two sides fell out. The Xbox 360 switched to an ATI chip.

After the Xbox, consoles were never again the center of NVIDIA's business. It would later supply graphics chips for other consoles, including Sony's PlayStation 3, but its firepower was concentrated on PC graphics cards.

This experience of being pushed out of the Xbox line by Microsoft paradoxically turned out to be NVIDIA's luck, because it forced Jensen Huang to ponder a question: besides drawing games, what else can a GPU do?

The answer to that question was called CUDA. It will appear in Chapter 7.

But before arriving at Chapter 7, the story must first shift to another timeline. By the 2010s, the DirectX lock-in discussed in Chapter 2 had begun producing a side effect Microsoft hadn't foreseen: people who needed freedom started leaving Windows. Scientific researchers, server administrators, and those who would later train AI models all flocked to Linux.

And exactly while these people were flocking to Linux, Intel made a mistake as fatal as NVIDIA's NV1—only Intel didn't have a Shoichiro Irimajiri to save it.

The name of that mistake was Larrabee.